Are You Searching for Ways to Build AI Labs for Startups Without Breaking the Bank?

AI Labs for Startups represent specialized environments where emerging companies can experiment with artificial intelligence technologies, develop machine learning models, and innovate without the prohibitive costs traditionally associated with AI development. These labs combine cloud computing resources, open-source frameworks, and strategic infrastructure planning to enable startups to compete with enterprise-level organizations while maintaining lean operational budgets.

The AI revolution is here, and it's moving faster than anyone anticipated. But here's the uncomfortable truth:

While tech giants pour billions into their AI infrastructure, startups face a critical challenge—how do you experiment, innovate, and compete when a single GPU cluster can cost more than your entire seed funding?

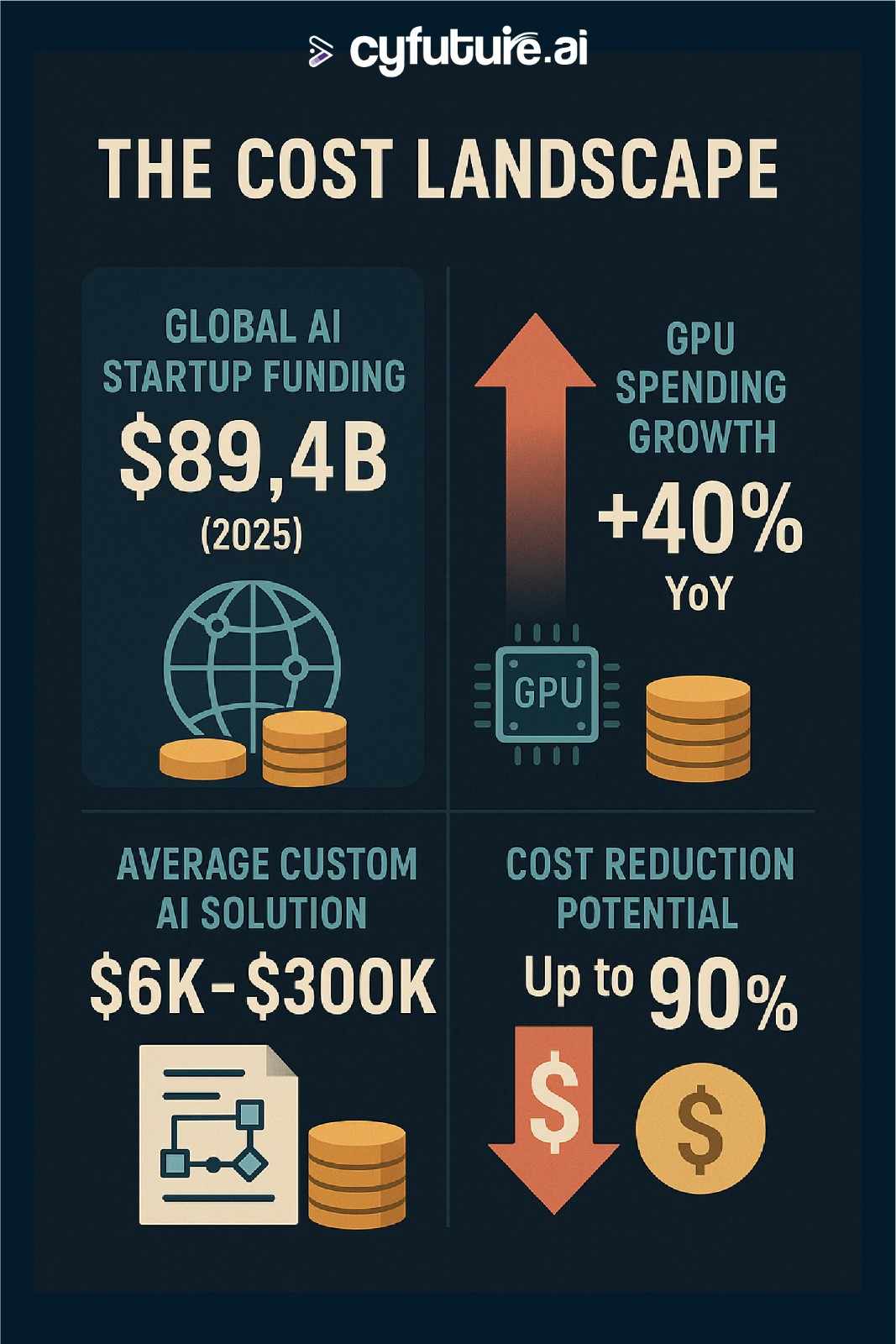

The numbers tell a compelling story. In 2025, AI startups attracted $89.4 billion in global venture capital, representing 34% of all VC investment. Yet, spending on GPU instances has grown by 40% as organizations experiment with AI. The paradox? Companies need to experiment to survive, but experimentation itself has become expensive.

Here's what makes this moment different: You don't need millions to start. The democratization of AI tools, the explosion of open-source frameworks, and innovative cloud pricing models have created unprecedented opportunities for resource-constrained startups.

What Are AI Labs for Startups?

AI Labs for Startups are dedicated experimental environments designed specifically for emerging companies to develop, test, and deploy artificial intelligence solutions without the massive infrastructure investments traditionally required. These labs combine rented cloud GPU resources (including H100 instances), IDE Lab as a Service, open-source machine learning frameworks, collaborative development tools, and cost-optimization strategies to level the playing field between startups and established tech giants.

Unlike traditional corporate R&D centers that demand millions in upfront capital, modern AI labs for startups operate on a pay-as-you-go model. They provide access to cutting-edge computational power, pre-trained models, and development frameworks that would otherwise be financially out of reach. This shift has fundamentally changed the startup landscape—small teams can now build sophisticated AI applications that compete directly with products from companies with thousand-employee engineering teams.

The concept extends beyond just hardware access. Modern AI labs encompass entire ecosystems that include experiment tracking tools, version control systems, collaborative notebooks, model registries, deployment pipelines, and cloud-based IDEs. Platforms like Cyfuture AI are pioneering this space by offering integrated AI infrastructure—combining IDE Lab as a Service, GPU rentals, and L40S-powered environments into cohesive, cost-effective solutions that help startups innovate faster and smarter.

The Real Cost of AI Experimentation: Breaking Down the Numbers

Let's talk about what AI experimentation actually costs in 2025—and why understanding these numbers is critical for startup survival.

GPU Infrastructure: The Elephant in the Room

Custom AI solutions can range from $6,000 to over $300,000, including development and rollout. But the ongoing costs are where things get interesting.

On Google Cloud, a single A100 GPU instance can cost over 15X more than a standard CPU instance. For startups running experiments, this adds up fast. However, the landscape has shifted dramatically. The most widely used GPU-based instances are also the least expensive, suggesting many companies are still in the experimentation phase.

Here's the breakdown:

- NVIDIA RTX 4090: Starting at $0.18/hour on providers like RunPod

- NVIDIA A100: From $0.40/hour on select cloud platforms

- NVIDIA H100: Approximately $2.39/hour for cutting-edge workloads

- NVIDIA L40S: From $0.57/hour for balanced performance

Compare this to 2023, when similar resources could cost 3-5X more per hour. The cost collapse has been dramatic.
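To see how these hourly rates translate into a bill, here is a minimal sketch that multiplies the prices listed above by a run length. The 72-hour workload is a hypothetical example, and real prices vary by provider and region.

```python
# Hourly rates quoted in the list above (USD per GPU-hour); actual prices vary by provider.
HOURLY_RATE_USD = {
    "RTX 4090": 0.18,
    "A100": 0.40,
    "L40S": 0.57,
    "H100": 2.39,
}


def experiment_cost(gpu: str, hours: float, gpu_count: int = 1) -> float:
    """Return the estimated rental cost in USD for one experiment."""
    return round(HOURLY_RATE_USD[gpu] * hours * gpu_count, 2)


if __name__ == "__main__":
    # Hypothetical workload: a 72-hour run on a single GPU of each type.
    for gpu in HOURLY_RATE_USD:
        print(f"{gpu}: ${experiment_cost(gpu, hours=72):,.2f}")
```

Running the same estimate for each GPU type before launching a job makes it obvious when a cheaper card is good enough.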

The Hidden Costs Nobody Talks About

Beyond GPU rental fees, startups face several hidden expenses:

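Model Storage Sprawl: Each abandoned AI experiment leaves storage liabilities. If a single PyTorch model checkpoint averages 12GB, 100 failed experiments consume 1.2TB. At a typical object-storage rate of about $0.023 per GB-month that is roughly $28 per month, and several times more on block or high-performance storage, billed indefinitely until someone deletes the files.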

Cross-Regional Data Transfer: Processing European user data through U.S.-based AI models can incur $0.09/GB in transfer fees on AWS. For data-intensive applications, this accumulates quickly.

Shadow Costs: One SaaS company discovered $280,000 in monthly unaccounted cloud spend from 23 undocumented AI services. Marketing teams spinning up unauthorized AI image generators or HR using unvetted resume screeners create cost leakage that's difficult to track.

Container Waste: A striking 83% of container costs are associated with idle resources. About 54% of this wasted spend comes from cluster idle (overprovisioning infrastructure), while 29% stems from workload idle (oversized resource requests).
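The storage and transfer items above come down to simple arithmetic, which is worth scripting so the numbers get checked regularly. In this rough sketch the 12GB checkpoint size and the $0.09/GB transfer fee are taken from the text, while the storage price per GB-month is an assumed placeholder that you should replace with your provider's actual rate.

```python
def checkpoint_storage_gb(num_experiments: int, gb_per_checkpoint: float = 12.0) -> float:
    """Total storage held by leftover checkpoints, in GB."""
    return num_experiments * gb_per_checkpoint


def monthly_storage_cost(total_gb: float, usd_per_gb_month: float) -> float:
    """Recurring monthly charge for keeping that data around."""
    return round(total_gb * usd_per_gb_month, 2)


def transfer_cost(gb_transferred: float, usd_per_gb: float = 0.09) -> float:
    """One-off charge for moving data between regions."""
    return round(gb_transferred * usd_per_gb, 2)


if __name__ == "__main__":
    total_gb = checkpoint_storage_gb(100)  # 100 abandoned experiments
    # 0.023 USD/GB-month is an assumed object-storage rate, not a quoted price.
    print(f"{total_gb:.0f} GB stored, ${monthly_storage_cost(total_gb, 0.023)} per month")
    print(f"Moving 1 TB across regions: ${transfer_cost(1000)}")
```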

The Cost Collapse Revolution

Here's the game-changer: AI costs have shifted from prohibitive to negligible for many use cases.

DeepSeek reports that the final training run for its V3 model cost about $5.6 million, yet the model performs competitively with OpenAI's GPT-4o, which is backed by an estimated $20 billion in investment. Companies have also shown that data generation costs can be cut by 90% by using existing models to create training examples.

OpenAI's GPT-4o-mini is 97% cheaper for input tokens and 96% cheaper for output tokens than GPT-4o. For workloads the smaller model can handle, that is roughly a 97% drop in per-token cost.
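Percentages like these are easy to verify yourself. The sketch below computes the saving from two price points; the per-million-token prices used are illustrative assumptions, so check the provider's current price list before relying on them.

```python
def percent_cheaper(old_price: float, new_price: float) -> float:
    """Percentage saved when moving from old_price to new_price."""
    return round((1 - new_price / old_price) * 100, 1)


if __name__ == "__main__":
    # Assumed prices in USD per million tokens: (larger model, smaller model).
    assumed_prices = {"input": (5.00, 0.15), "output": (15.00, 0.60)}
    for kind, (larger_model, smaller_model) in assumed_prices.items():
        print(f"{kind} tokens: {percent_cheaper(larger_model, smaller_model)}% cheaper")
```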

<img src="https://i.ibb.co/svDQd57Z/ai-cost-landscape-info.jpg" alt="ai-cost-landscape-info" border="0">

Strategic Approaches to Cost-Effective AI Experimentation

1. Embrace Open-Source Frameworks First

The open-source AI ecosystem has matured dramatically. In 2024, over 150 open-source AI startups innovated across various use cases, with more than 45% of deals being Series A+ rounds, indicating strong growth-stage investments.

Top Open-Source Tools for Startups:

TensorFlow (182,000+ GitHub stars): Google's end-to-end machine learning platform remains the gold standard for production deployments. Its versatility across CPUs, GPUs, and TPUs makes it ideal for startups planning to scale.

PyTorch: Preferred by 60% of AI research projects, PyTorch's dynamic computation graphs and Pythonic interface accelerate experimentation cycles. The thriving community provides extensive pre-built models and tutorials.

Ollama (135,000+ GitHub stars): The top open-source startup of 2024, Ollama enables running large language models like Meta's Llama and DeepSeek locally. This eliminates cloud API costs entirely for development and testing.

Hugging Face Transformers (124,000+ GitHub stars): Gives access to hundreds of thousands of pre-trained models on the Hugging Face Hub for NLP, computer vision, and multimodal tasks. This library has democratized access to state-of-the-art AI capabilities.

LangChain: The framework dominated 2023's ROSS Index for building LLM-centric applications. It simplifies the complex orchestration of AI agents, data retrieval, and model chaining.

Keras: The high-level API simplifies neural network development with an intuitive interface. Perfect for rapid prototyping and educational purposes.

These tools aren't just free; for experimentation they are often as capable as proprietary alternatives. The largest open-weight models from Meta (Llama) and Mistral now rival GPT-4o on key benchmarks like MMLU, while their weights remain freely available.
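As a taste of what local experimentation looks like, here is a small sketch that sends a prompt to an Ollama server running on your own machine, using only the Python standard library. It assumes Ollama is listening on its default local port and that a model has already been pulled; the model name `llama3` is an assumption, so substitute whatever you have installed.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def build_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama3") -> str:
    """Send the prompt and return the generated text (requires a running server)."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=120) as resp:
        return json.loads(resp.read())["response"]


if __name__ == "__main__":
    print(generate("Summarize transfer learning in one sentence."))
```

Because everything runs locally, you can iterate on prompts as often as you like without touching a metered API.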

2. Optimize Cloud GPU Usage

Smart startups don't just rent GPUs—they architect their experiments to minimize compute time.

Spot Instances and Preemptible VMs: Major cloud providers offer spot instances at 60-90% discounts compared to on-demand pricing. While these can be interrupted, they're perfect for:

- Model training that supports checkpointing

- Batch inference jobs

- Development and testing environments

- Non-time-critical experiments
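Spot instances only pay off if a job can pick up where it left off. The following is a minimal sketch of that checkpoint-and-resume pattern using a JSON file; the training step is a placeholder, and the `stop_after` argument is a hypothetical switch that simulates the instance being reclaimed.

```python
import json
import os
import tempfile
from typing import Optional


def save_checkpoint(path: str, state: dict) -> None:
    """Write to a temp file and rename, so an interruption cannot corrupt the checkpoint."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w") as f:
        json.dump(state, f)
    os.replace(tmp_path, path)


def load_checkpoint(path: str) -> dict:
    """Resume from disk if a checkpoint exists, otherwise start fresh."""
    if os.path.exists(path):
        with open(path) as f:
            return json.load(f)
    return {"step": 0, "loss": None}


def train(path: str, total_steps: int, stop_after: Optional[int] = None) -> dict:
    state = load_checkpoint(path)
    steps_this_run = 0
    while state["step"] < total_steps:
        if stop_after is not None and steps_this_run >= stop_after:
            break  # simulates the spot instance being interrupted
        state["step"] += 1
        state["loss"] = 1.0 / state["step"]  # placeholder for a real training step
        save_checkpoint(path, state)  # real jobs would checkpoint every N steps
        steps_this_run += 1
    return state


if __name__ == "__main__":
    ckpt = os.path.join(tempfile.mkdtemp(), "ckpt.json")
    print(train(ckpt, total_steps=10, stop_after=4))  # interrupted at step 4
    print(train(ckpt, total_steps=10))                # resumes and finishes
```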

Right-Sizing Your Compute: The most expensive mistake startups make is using H100 GPUs for tasks that run perfectly well on RTX 4090s or even CPU instances.

- Small model training/inference: RTX 4090 or L4 GPUs ($0.18-0.39/hour)

- Medium-scale fine-tuning: A100 GPUs ($0.40-0.80/hour)

- Large-scale training: H100 GPUs ($0.99-2.39/hour)

- Production inference: Optimized instances with auto-scaling
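One way to make right-sizing a habit is to encode it as a lookup that runs before any job is launched. In this sketch the price ranges come from the list above, but the model-size cut-offs are illustrative guesses, not benchmarks; tune them to your own workloads.

```python
# (upper bound on model size in billions of parameters, GPU tier, hourly USD range)
# Price ranges come from the list above; the size cut-offs are illustrative guesses.
GPU_TIERS = [
    (1.0, "RTX 4090 / L4", (0.18, 0.39)),
    (13.0, "A100", (0.40, 0.80)),
    (float("inf"), "H100", (0.99, 2.39)),
]


def pick_tier(params_billion: float) -> tuple[str, tuple[float, float]]:
    """Return the cheapest tier whose size limit covers the model."""
    for limit, name, price_range in GPU_TIERS:
        if params_billion <= limit:
            return name, price_range
    raise ValueError("unreachable: the last tier has no upper bound")


if __name__ == "__main__":
    for size in (0.3, 7, 70):
        name, (low, high) = pick_tier(size)
        print(f"{size}B parameters -> {name} (${low}-{high}/hour)")
```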

Arm-Based Instances: Organizations using Arm-based instances now spend 18% of their EC2 compute budget on them, twice as much as a year ago. These instances use up to 60% less energy than comparable x86-based EC2 instances and often provide better performance at lower cost.

1-Click Clusters and Flexible Reservations: Platforms like Lambda Labs offer 1-Click Clusters with 16-1,536 NVIDIA GPUs, from 1 week to 3 years, with no commitment and no sales call required. This flexibility allows startups to scale resources precisely with project needs.

3. Leverage Transfer Learning and Pre-Trained Models

Why train from scratch when giants have already done the heavy lifting?

Transfer learning has become the secret weapon of cost-conscious startups. Instead of training models on millions of images or billions of tokens, startups can:

- Start with Foundation Models: Use pre-trained models from Hugging Face, OpenAI, or Anthropic as starting points

- Fine-Tune on Domain Data: Customize these models with just thousands (not millions) of domain-specific examples

- Deploy Specialized Solutions: Create industry-specific AI applications at a fraction of traditional development costs

This approach can reduce training costs by 85-95% while achieving comparable or superior results for specific use cases.
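The core idea, keeping the pre-trained part frozen and training only a small head on your own data, can be shown with a deliberately tiny toy. Everything here is hypothetical: `pretrained_features` stands in for a real pre-trained encoder, and the dataset is invented. A real project would use a framework such as PyTorch with a model from Hugging Face, but the division of labor is the same.

```python
import math


def pretrained_features(x: float) -> list[float]:
    """Stand-in for a frozen pre-trained encoder: fixed, never updated below."""
    return [x, x * x]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_head(data, steps: int = 500, lr: float = 0.5):
    """Fit only a small logistic-regression head on top of the frozen features."""
    feats = [pretrained_features(x) for x, _ in data]
    labels = [y for _, y in data]
    w, b, n = [0.0, 0.0], 0.0, len(data)
    for _ in range(steps):
        grad_w, grad_b = [0.0, 0.0], 0.0
        for f, y in zip(feats, labels):
            err = sigmoid(w[0] * f[0] + w[1] * f[1] + b) - y
            grad_w[0] += err * f[0] / n
            grad_w[1] += err * f[1] / n
            grad_b += err / n
        w = [w[0] - lr * grad_w[0], w[1] - lr * grad_w[1]]
        b -= lr * grad_b
    return w, b


def predict(x: float, w: list[float], b: float) -> int:
    f = pretrained_features(x)
    return int(sigmoid(w[0] * f[0] + w[1] * f[1] + b) > 0.5)


# Invented "domain dataset": label 1 when |x| is large, 0 when it is small.
DATA = (
    [(x / 10, 1) for x in range(15, 21)]
    + [(-x / 10, 1) for x in range(15, 21)]
    + [(x / 10, 0) for x in range(-5, 6)]
)

if __name__ == "__main__":
    w, b = train_head(DATA)
    correct = sum(predict(x, w, b) == y for x, y in DATA)
    print(f"{correct}/{len(DATA)} training examples classified correctly")
```

Only three numbers are learned here (two weights and a bias), which is the point: the expensive representation is reused and only the cheap part is trained.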

AI21 Labs offers versatile solutions through its Jurassic-2 models, enabling affordable custom business app development. These models provide a middle ground between expensive custom development and generic pre-trained models.

4. Implement Efficient MLOps Practices

The difference between a $10,000 monthly cloud bill and a $100,000 bill often comes down to operational efficiency.

Experiment Tracking: Use MLflow (open-source) to track experiments, package code into reproducible runs, and manage model deployment. This prevents duplicate work and identifies cost-effective approaches quickly.

Model Versioning: Implement proper version control for models, data, and experiments. DVC (Data Version Control) and Weights & Biases provide free tiers sufficient for early-stage startups.

Automated Scaling: Configure auto-scaling policies that spin down resources during idle periods. Many startups waste 50-70% of their cloud budget on resources running 24/7 that are only actively used 6-8 hours daily.

Monitoring and Optimization: CloudZero and similar FinOps platforms help optimize cloud costs without sacrificing performance. Innovative brands like Klaviyo and Coinbase use CloudZero to manage over $5 billion in cloud spend, with Upstart saving $20 million through cost optimization.
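To put a number on the idle-resource problem described under Automated Scaling, here is a quick sketch comparing an always-on instance with one that only runs during active hours. The hourly rate and usage pattern are hypothetical inputs.

```python
def idle_waste(hourly_rate: float, active_hours_per_day: float, days: int = 30) -> dict:
    """Compare an always-on instance with one that is shut down when idle."""
    always_on = hourly_rate * 24 * days
    auto_scaled = hourly_rate * active_hours_per_day * days
    return {
        "always_on_usd": round(always_on, 2),
        "auto_scaled_usd": round(auto_scaled, 2),
        "wasted_pct": round((1 - active_hours_per_day / 24) * 100, 1),
    }


if __name__ == "__main__":
    # Hypothetical: one GPU at $2.39/hour that is actively used 8 hours a day.
    print(idle_waste(hourly_rate=2.39, active_hours_per_day=8))
```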

5. Adopt the "Compound AI Systems" Approach

2024 saw the emergence of "compound AI systems"—architectures that combine multiple AI components rather than relying on a single large model.

This approach offers several cost advantages:

- Use smaller, cheaper models for routine tasks

- Reserve expensive models for complex queries requiring advanced reasoning

- Implement caching layers to avoid redundant API calls

- Build retrieval-augmented generation (RAG) systems that supplement smaller models with external knowledge

Companies implementing compound AI systems report 40-60% cost reductions compared to monolithic large model architectures, while maintaining or improving output quality.
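The retrieval piece of a RAG system can be surprisingly small. The sketch below ranks documents by simple word-overlap cosine similarity and builds a prompt from the best match. A production system would use embeddings and a vector store instead; the prompt wording is just an assumption about what a small model might be given.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase bag-of-words representation."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 1) -> list[str]:
    """Return the k documents most similar to the query."""
    q = tokenize(query)
    ranked = sorted(documents, key=lambda d: cosine(q, tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: list[str]) -> str:
    """Attach retrieved context so a smaller model can answer from it."""
    context = "\n".join(retrieve(query, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    docs = [
        "Spot instances can be interrupted at any time.",
        "Quantization converts weights to 8-bit precision.",
    ]
    print(build_prompt("What does quantization do to weights?", docs))
```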

Real-World Success Stories: Startups Winning on Limited Budgets

The $50,000 MVP That Competed With Giants

One healthcare AI startup built their initial proof-of-concept for under $50,000, a figure often cited as the entry-level cost of an AI solution MVP. Their strategy:

- Used Ollama to run Llama 2 locally for development ($0 cloud costs)

- Leveraged transfer learning from BioBERT for medical text understanding

- Deployed on Lambda Labs' spot instances for production testing

- Implemented aggressive caching to minimize inference costs

Result: They secured Series A funding before exceeding $150,000 in total technical spend.

The Open-Source Advantage

Zed Industries went open source in January 2024 and gained more than 52,000 GitHub stars through the year. By building their AI-enabled code editor on open foundations, they:

- Attracted community contributions that would have cost millions in development

- Built credibility faster than closed-source competitors

- Reduced infrastructure costs through distributed development

- Created a sustainable moat through ecosystem effects

The DeepSeek Phenomenon

DeepSeek demonstrates what's possible with resource efficiency. Creating their V3 model for just $5.6 million (compared to OpenAI's $20 billion investment), they:

- Used distillation techniques to train smaller models from larger ones

- Trained on NVIDIA H800 GPUs, the export-compliant, bandwidth-limited variant of the H100, because of export restrictions

- Achieved competitive performance with open-source approaches

- Proved that throwing money at problems isn't the only path to AI excellence

<a href="https://cyfuture.cloud/join?p=3"><img src="https://i.ibb.co/yD9m0Bf/Unlock-AI-Experimentation-Power-for-Startups-cta.jpg" alt="Unlock-AI-Experimentation-Power-for-Startups-cta" border="0"></a>

Building Your AI Lab: A Practical Roadmap

Phase 1: Foundation (Months 1-3, Budget: $0-5,000)

Objective: Establish development environment and validate core concepts

Actions:

- Set up local development using Ollama and open-source models

- Experiment with Hugging Face Transformers for your use case

- Build proof-of-concept using free cloud credits (AWS, GCP, Azure all offer generous new customer credits)

- Validate market fit before significant infrastructure investment

Tools:

- Ollama for local LLM experimentation

- Google Colab or Kaggle Notebooks for initial GPU access (free)

- GitHub for version control

- Weights & Biases free tier for experiment tracking

Phase 2: Validation (Months 4-6, Budget: $5,000-20,000)

Objective: Prove technical feasibility and market demand

Actions:

- Migrate to cloud infrastructure with spot instances

- Implement proper MLOps practices

- Build minimum viable product (MVP)

- Conduct limited pilot testing with early customers

Tools:

- Lambda Labs or RunPod for cost-effective GPU access

- MLflow for experiment management

- FastAPI for model serving

- Docker for containerization

Cyfuture AI Advantage: At this stage, platforms like Cyfuture AI offer integrated solutions that combine infrastructure, monitoring, and deployment tools—reducing both complexity and costs compared to piecing together multiple services.

Phase 3: Scale (Months 7-12, Budget: $20,000-100,000)

Objective: Optimize for production and scale to initial customer base

Actions:

- Implement auto-scaling infrastructure

- Optimize models for inference efficiency

- Set up monitoring and alerting

- Establish cost allocation and tracking

Tools:

- Kubernetes for orchestration

- Production-grade cloud services with reserved instances

- FinOps tools for cost optimization

- Advanced monitoring solutions

Advanced Cost Optimization Techniques

Model Compression and Quantization

Reduce model size and inference costs by up to 75% without significant accuracy loss (moving from 32-bit to 8-bit weights alone shrinks a model to a quarter of its size):

- Quantization: Convert model weights from 32-bit to 8-bit or 4-bit precision

- Pruning: Remove unnecessary neural network connections

- Knowledge Distillation: Train smaller models to replicate larger model behavior
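To make the quantization step concrete, here is a bare-bones sketch of symmetric 8-bit quantization on a plain list of weights. It is a simplified illustration rather than what any particular library does internally; real toolkits quantize per channel or per block and handle activations as well.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]


def size_reduction_pct(bits_before: int = 32, bits_after: int = 8) -> float:
    """Storage saved by using fewer bits per weight."""
    return (1 - bits_after / bits_before) * 100


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.003]
    quantized, scale = quantize_int8(weights)
    print(quantized, dequantize(quantized, scale))
    print(f"32-bit to 8-bit saves {size_reduction_pct():.0f}% of weight storage")
```

Each restored weight differs from the original by at most half a quantization step, which is why accuracy usually holds up at 8 bits and starts to degrade at more aggressive settings.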

Dynamic Model Selection

Implement intelligent routing that selects the right model for each query:

- Simple queries → Small, fast models (GPT-3.5 level)

- Complex tasks → Advanced models (GPT-4 level)

- Batch processing → Optimized for throughput

- Real-time requests → Optimized for latency

This approach can reduce costs by 50-70% while maintaining user experience.
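A router does not need to be sophisticated to start saving money. The sketch below uses a crude keyword-and-length heuristic to decide between a small and a large model. The prices, hint words, and length threshold are all hypothetical; a production router would more likely use a trained classifier.

```python
# Hypothetical prices in USD per 1,000 tokens for each model tier.
MODEL_PRICE_PER_1K_TOKENS = {"small": 0.0005, "large": 0.01}

# Words that suggest the query needs multi-step reasoning (illustrative list).
REASONING_HINTS = ("why", "explain", "compare", "analyze", "step by step")


def choose_model(query: str, max_simple_words: int = 30) -> str:
    """Send long or reasoning-heavy queries to the large model, the rest to the small one."""
    text = query.lower()
    if len(text.split()) > max_simple_words or any(hint in text for hint in REASONING_HINTS):
        return "large"
    return "small"


def blended_cost(queries: list[str], tokens_per_query: int = 500) -> float:
    """Estimated total cost when each query goes to its routed model."""
    return sum(
        MODEL_PRICE_PER_1K_TOKENS[choose_model(q)] * tokens_per_query / 1000
        for q in queries
    )


if __name__ == "__main__":
    sample = ["What are your opening hours?", "Explain why spot instances are cheaper"]
    for q in sample:
        print(f"{choose_model(q):>5}: {q}")
    print(f"Blended cost: ${blended_cost(sample):.5f}")
```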

Caching and Memoization

Implement aggressive caching strategies:

- Cache common query responses

- Store embeddings for frequently accessed content

- Memoize expensive computations

- Use CDNs for model artifacts

Companies report 30-50% cost reductions through intelligent caching alone.
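Here is a minimal sketch of a response cache that sits in front of any model call. The `model_fn` argument is a placeholder for whatever function actually calls your model, and the normalization (lowercasing and collapsing whitespace) is one simple choice among many.

```python
import hashlib
from typing import Callable


class ResponseCache:
    """Return stored answers for repeated prompts instead of calling the model again."""

    def __init__(self, model_fn: Callable[[str], str]):
        self._model_fn = model_fn
        self._store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt: str) -> str:
        # Normalize case and whitespace so trivially different prompts share an entry.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def ask(self, prompt: str) -> str:
        key = self._key(prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        answer = self._model_fn(prompt)
        self._store[key] = answer
        return answer


if __name__ == "__main__":
    cache = ResponseCache(lambda prompt: f"(model answer to: {prompt})")
    cache.ask("What is a spot instance?")
    cache.ask("what is a   spot instance?")  # served from the cache
    print(f"hits={cache.hits}, misses={cache.misses}")
```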

Read More: https://cyfuture.ai/blog/ai-lab-as-a-service-india

Common Pitfalls to Avoid

Over-Engineering Too Early

The biggest mistake AI startups make? Building infrastructure for scale before validating product-market fit.

Gartner predicts 30% of generative AI projects will be abandoned after proof-of-concept by end of 2025. Don't be in that 30% because you spent all your resources on infrastructure instead of customer validation.

Ignoring Total Cost of Ownership

Consider the full cost stack:

- Compute costs (obvious)

- Data storage and transfer (often overlooked)

- Engineering time (your most expensive resource)

- Opportunity cost (what else could you build with those resources?)

Vendor Lock-In

Models and pipelines built around one provider's proprietary accelerators, managed services, or APIs can be hard to move to another cloud. This reduces flexibility and increases long-term costs. Design for portability from day one, for example by using open model formats and containerized deployments.

Neglecting Data Quality

AI models trained on poor-quality data produce poor results, regardless of computational power. Investing in data quality early prevents expensive retraining cycles later.

Future-Proofing Your AI Lab Strategy

Trends Shaping 2025 and Beyond

The Continued Cost Collapse: AI costs are declining 40-50% annually. Build your strategy assuming computational resources will become cheaper, not more expensive.

Open Source Momentum: Open-source models have closed the performance gap with proprietary alternatives. The top 20 open-source startups of 2024 saw GitHub star growth exceeding 10,000%, with 11 of 20 focused on AI.

Serverless AI: Serverless GPU offerings from platforms like RunPod and Hyperstack eliminate idle costs entirely. Pay only for compute time actually used.

Agentic AI Systems: The shift from single models to systems of AI agents will reward architectural creativity over raw computational power.

Preparing for What's Next

Invest in Architecture, Not Just Infrastructure: The companies that win won't necessarily have the biggest models—they'll have the smartest systems architecture.

Build Distribution Advantages: As one founder noted, "Production costs are crashing while distribution stays just as hard to crack." Focus on go-to-market as much as technology.

Maintain Flexibility: The AI landscape changes monthly. Design systems that can adapt to new models, new pricing, and new capabilities without complete rewrites.

Contribute to Open Source: The startups succeeding in 2025 aren't just consuming open-source tools—they're contributing to them, building ecosystem advantages that go beyond technology.

Also Check: https://cyfuture.ai/blog/top-ai-lab-as-a-service-providers

Accelerate Your AI Journey With Smart Experimentation

The democratization of AI isn't coming—it's here.

In 2025, the barrier to AI innovation isn't technology or capital. It's strategy.

While 89% of Fortune 500 companies now have dedicated AI investment arms, and global AI startup funding reached $89.4 billion, the winners won't be determined by who spends the most. They'll be the teams that experiment smartest, iterate fastest, and deliver real value to customers.

The startups surviving beyond 2025 aren't building with unlimited resources—they're building with unlimited creativity. They're leveraging the 90% cost reduction in data preparation, the 97% decrease in model costs, and the explosion of open-source tools to compete with tech giants.

Your next steps:

- Start experimenting today using Ollama and open-source frameworks—no cloud costs required

- Validate your approach with minimal infrastructure before scaling

- Implement smart cost controls from day one to maximize runway

- Leverage specialized platforms like Cyfuture AI to reduce operational complexity

- Focus on distribution as much as technology—the best model means nothing without users

The AI revolution rewards those who move fast and optimize wisely. Your competitors aren't hesitating—they're experimenting right now.

Transform your AI strategy with Cyfuture AI and join the startups proving that smart experimentation beats massive budgets. Because in 2025, the question isn't whether you can afford to experiment with AI—it's whether you can afford not to.

Frequently Asked Questions:

1. What is an AI lab for startups?

An AI lab is a dedicated environment or platform where startups can experiment with AI tools, models, and data to build and test innovative solutions without investing heavily in infrastructure.

2. How do AI labs help reduce startup costs?

AI labs provide access to pre-trained models, cloud resources, and automation tools, allowing startups to test and deploy ideas without paying for full-scale development or hardware.

3. Can a small startup build its own AI lab?

Yes. With cloud-based platforms like Google AI, Microsoft Azure AI, and AWS AI, even small startups can create virtual AI labs with minimal upfront investment.

4. What kind of projects can startups run in AI labs?

Startups can develop prototypes, train AI models, test automation workflows, analyze data, and build predictive applications within AI lab environments.

5. Are AI labs suitable for non-technical founders?

Yes. Many AI labs now offer no-code or low-code interfaces, making it easier for non-technical founders to experiment, validate ideas, and collaborate with developers.

Author Bio:

Meghali is a tech-savvy content writer with expertise in AI, Cloud Computing, App Development, and Emerging Technologies. She excels at translating complex technical concepts into clear, engaging, and actionable content for developers, businesses, and tech enthusiasts. Meghali is passionate about helping readers stay informed and make the most of cutting-edge digital solutions.