Introduction: Are You Navigating the AI Research Landscape Without a Compass?

The artificial intelligence research ecosystem has undergone a seismic transformation. Corporate AI labs and academic AI labs now represent two fundamentally different paradigms for innovation—each with distinct advantages, resource allocations, and strategic value propositions that can make or break your AI initiatives.

With U.S. private AI investment hitting $109 billion in 2024 alone and 67% of surveyed AI researchers reporting academia as their main affiliation, understanding these differences isn't just academic; it's mission-critical for any organization looking to leverage AI effectively.

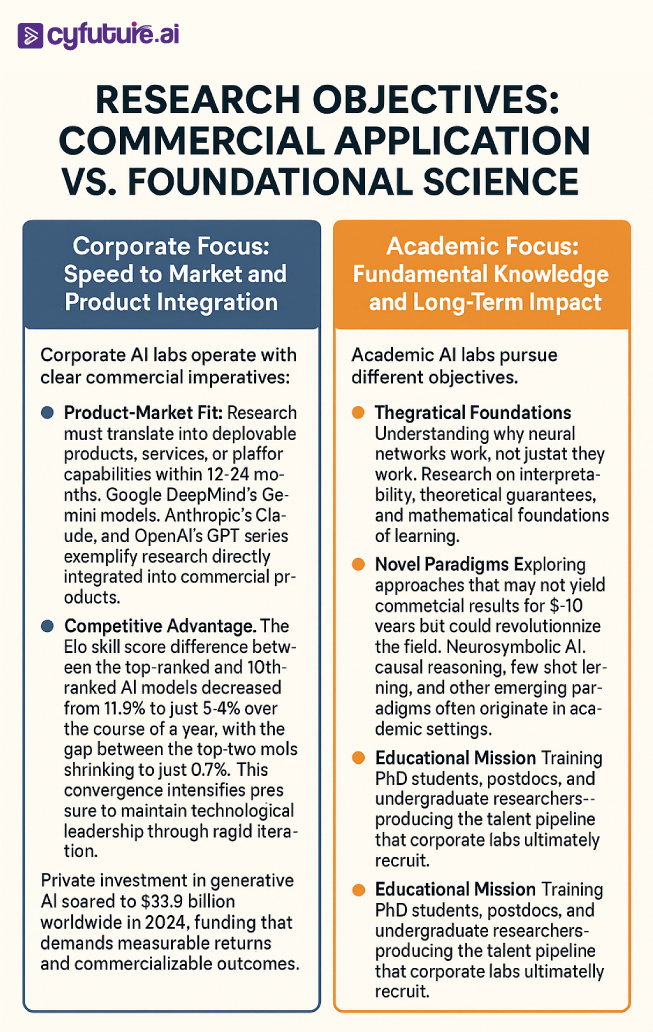

Here's the reality:

Corporate AI labs operate with unprecedented computational resources. Meta deployed 24,000 NVIDIA H100 GPUs to train Llama 3, while OpenAI announced a partnership with NVIDIA for at least 10 gigawatts of compute infrastructure, the largest AI infrastructure deployment in history. Academic labs work at a very different scale: Stanford HAI's seed grants top out at just $75,000 for a year-long project.

The divergence is stark, the implications profound.

What Are Corporate AI Labs and Academic AI Labs?

Corporate AI labs are industry-funded research divisions within technology companies, focused on developing AI technologies that can be commercialized and deployed at scale. These labs—including Google DeepMind, OpenAI, Anthropic, Meta AI Research, and Microsoft Research—operate with massive budgets, cutting-edge hardware infrastructure, and direct pathways from research to product deployment.

Academic AI labs are university-affiliated research institutions dedicated to fundamental AI research, education, and open scientific inquiry. Stanford AI Lab (SAIL), MIT's Computer Science and Artificial Intelligence Laboratory (CSAIL), UC Berkeley's AI Research Lab, and Carnegie Mellon's AI department exemplify this model, emphasizing theoretical breakthroughs, peer-reviewed publications, and training the next generation of AI researchers.

The fundamental distinction?

Corporate labs bridge the gap between academic discovery and commercial application, while academic institutions prioritize rigorous inquiry and foundational knowledge creation.

The Resource Gap: Computing Power and Infrastructure Investment

Corporate Advantage in Computational Resources

The computational divide between corporate and academic AI labs has reached unprecedented levels.

By the end of 2024, Meta's infrastructure build-out included 350,000 NVIDIA H100 GPUs as part of a portfolio featuring compute power equivalent to nearly 600,000 H100s. This represents infrastructure that academic institutions simply cannot match.

Consider these staggering figures:

- NVIDIA and OpenAI announced a partnership to deploy at least 10 gigawatts of NVIDIA systems, with NVIDIA intending to invest up to $100 billion in OpenAI progressively

- Microsoft committed an $80 billion investment in AI-specific facilities, while Meta announced a $65 billion investment for 2025

- Some AI companies spend as much as 80% of their capital on computing resources such as graphics processing units

The academic response?

Stanford's HAI seed grants max out at $75,000 per project, with approximately 25 grants awarded annually. The National Science Foundation and NIH provide larger grants, but even multi-million dollar awards pale in comparison to the billions flowing into corporate labs.
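To put the two scales side by side, here is a rough back-of-the-envelope sketch using only figures quoted in this article. It assumes every seed grant is awarded at the $75,000 ceiling, which overstates the real total, and it compares that against Microsoft's stated $80 billion commitment purely for illustration. The function and constant names are made up for this example.

```python
# Figures quoted in this article; the comparison itself is only illustrative.
SEED_GRANT_MAX_USD = 75_000          # Stanford HAI seed grant ceiling
SEED_GRANTS_PER_YEAR = 25            # approximate number awarded annually
MICROSOFT_AI_FACILITIES_USD = 80e9   # Microsoft's stated AI facilities commitment


def annual_seed_funding(grant_usd: float, grants_per_year: int) -> float:
    """Upper bound on yearly seed funding if every grant hits the ceiling."""
    return grant_usd * grants_per_year


seed_total = annual_seed_funding(SEED_GRANT_MAX_USD, SEED_GRANTS_PER_YEAR)
ratio = MICROSOFT_AI_FACILITIES_USD / seed_total

print(f"Seed program ceiling: ${seed_total:,.0f} per year")
print(f"Corporate commitment is roughly {ratio:,.0f}x larger")
```

Even under that generous assumption, the seed program totals under $2 million a year, several orders of magnitude below a single corporate commitment.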

"Without sufficient computational resources, we have to choose between impactful use cases—either researching a cancer cure or offering free education. No one wants to make that choice. The answer is much more capacity to serve the massive need and opportunity." — Sam Altman, OpenAI CEO

The Academic Infrastructure Reality

Academic institutions face fundamental constraints:

Limited GPU Access: Most academic labs operate with dozens or hundreds of GPUs, not hundreds of thousands. Research projects compete for shared computing resources across departments.

Power and Cooling Limitations: AI facilities require 50-150kW per rack versus 10-15kW for traditional computing. Supporting that density means infrastructure upgrades that exceed most university capital budgets.

Hardware Procurement Delays: GPU supply constraints continue to impact AI project deployments, with Goldman Sachs analysts noting constraints will persist until at least mid-2025.

Yet this constraint breeds creativity:

Academic researchers develop more efficient algorithms, novel training techniques, and theoretical frameworks that don't require massive computational resources. In 2022, the smallest model registering a score higher than 60% on MMLU was PaLM with 540 billion parameters; by 2024, Microsoft's Phi-3-mini achieved the same threshold with just 3.8 billion parameters—a 142-fold reduction.
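The 142-fold figure follows directly from the two parameter counts quoted above. A minimal check, with a helper name chosen just for this example:

```python
def fold_reduction(larger: float, smaller: float) -> float:
    """How many times smaller the second quantity is than the first."""
    return larger / smaller


PALM_PARAMS = 540e9        # PaLM, 2022 (figure quoted above)
PHI3_MINI_PARAMS = 3.8e9   # Phi-3-mini, 2024 (figure quoted above)

reduction = fold_reduction(PALM_PARAMS, PHI3_MINI_PARAMS)
print(f"About {reduction:.0f}-fold fewer parameters for the same MMLU threshold")
```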

Among 475 survey respondents, 67% reported academia as their main affiliation, followed by corporate research environments, highlighting academia's continued role as the primary research training ground.

Talent Dynamics: Retention, Recruitment, and Career Trajectories

The Corporate Talent Magnet

Corporate labs have fundamentally reshaped AI talent markets:

Compensation Differential: Senior AI researchers at leading corporate labs command $500,000-$2,000,000+ annual compensation packages—5-10x typical academic faculty salaries. Even early-career researchers receive packages that academic institutions cannot match.

Retention Leadership: Anthropic demonstrated 80% employee retention rates during 2023-2024, with Google, Meta, Microsoft, Amazon, and Stripe serving as primary talent pools for AI labs.

Resource Access: The day-to-day gap for researchers is the difference between "I can run experiments with 10,000 GPUs overnight" and "I submitted a computing cluster allocation request three months ago."

Impact Scale: Deploying AI models to billions of users versus publishing papers read by thousands creates fundamentally different career satisfaction profiles.

Yet academia retains critical advantages:

Research Freedom: No pressure for quarterly results or product launches. Pure intellectual curiosity can drive research directions.

Publication Without Restriction: Academic researchers can publish findings immediately, while corporate researchers often face months-long review processes balancing scientific contribution against competitive advantage.

Institutional Prestige: A tenured position at Stanford, MIT, or Carnegie Mellon carries prestige that corporate titles cannot fully replicate.

The Academic Brain Drain Challenge

The statistics tell a concerning story:

New graduate hiring in Big Tech dropped 25% from 2023 and over 50% from pre-pandemic 2019 levels, with new grads accounting for just 7% of hires. Corporate labs increasingly recruit experienced researchers, not fresh PhDs.

Mid-career faculty face constant recruitment pressure. A single corporate offer can trigger competitive bidding that disrupts entire departments.

The result?

Universities invest heavily in training researchers through PhD programs, only to lose top talent to corporate labs immediately after graduation or after a few years of assistant professorship.

Publication Strategies and Knowledge Sharing

Corporate Selectivity vs. Academic Openness

Anthropic emphasizes research on LLM interpretability but generally publishes analyses on its Research website rather than in a conventional academic paper format. This exemplifies corporate labs' selective approach to knowledge sharing.

Corporate Publication Patterns:

- Strategic Timing: Papers released when competitive advantage has diminished or when recruiting/PR benefits outweigh information disclosure risks

- Partial Disclosure: Architectural details shared while training data, infrastructure specifics, and optimization techniques remain proprietary

- Internal-First Distribution: Research often deployed in products months before public disclosure

- Alternative Venues: Blog posts, technical reports, and GitHub repositories rather than peer-reviewed conferences

Academic Publication Imperatives:

- Peer Review Requirement: Faculty advancement, grant funding, and institutional prestige depend on peer-reviewed publications

- Complete Methodologies: Academic papers typically provide sufficient detail for reproducibility

- Preprint Culture: arXiv posts ensure rapid dissemination even before conference acceptance

- Open Source Commitment: Academic labs are more likely to release code, datasets, and models

The tension is real:

Companies need to protect competitive advantages. Academics need to share knowledge freely. The result? A hybrid landscape where some breakthrough research remains proprietary for years while other advances rapidly diffuse through open publication.

Innovation Speed: Rapid Iteration vs. Rigorous Validation

Corporate Velocity Advantages

149 new foundation models were released in 2023, more than doubling 2022's count. This acceleration reflects corporate labs' ability to iterate rapidly.

Factors Enabling Speed:

- No Grant Cycles: Ideas can go from concept to full-scale experiment in days, not months of proposal writing

- Continuous Computing Access: No waiting for allocation approvals or shared cluster availability

- Larger Teams: 50-200 person research groups can parallelize experimentation across multiple approaches simultaneously

- Direct Deployment Pathways: Research prototypes can become product features within sprints

Academic Rigor Advantages

Speed isn't everything:

Thorough Validation: Academic peer review catches errors, validates claims, and ensures reproducibility in ways that corporate blog posts cannot.

Theoretical Understanding: Rather than empirically discovering "what works," academic research often explains why methods work, enabling principled improvements.

Long-Term Perspective: Freedom to pursue research that may not yield results for years allows exploration of fundamental questions that corporate quarterly pressures prohibit.

Negative Results Publication: Academic incentives allow publication of "this didn't work" findings that help the community avoid dead ends—information corporate labs rarely share.

Consider this perspective from Reddit's r/MachineLearning community:

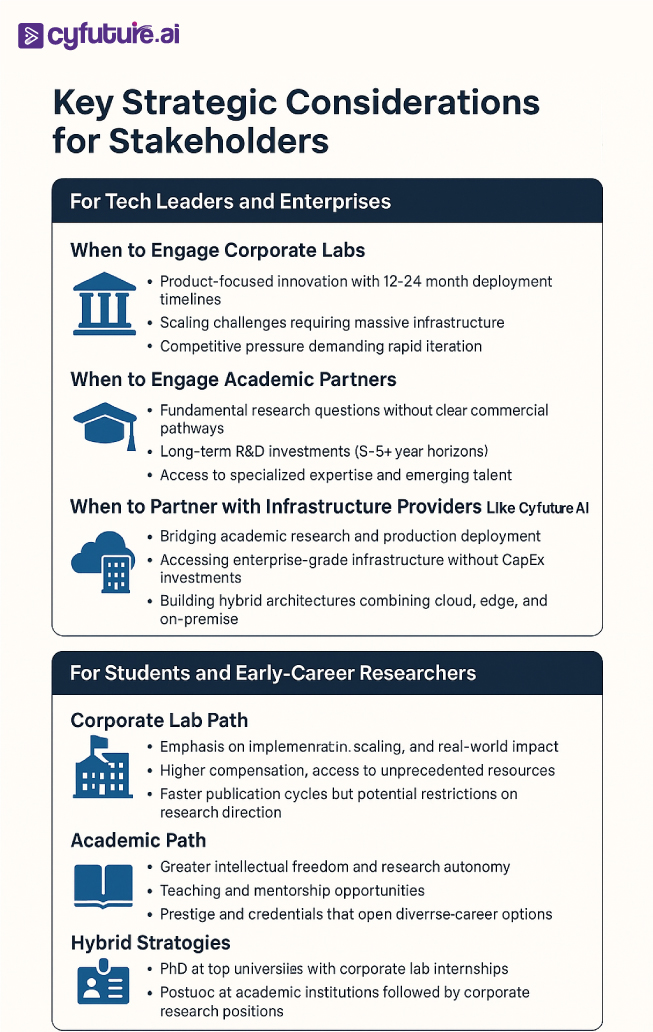

Collaboration Models: Bridging the Corporate-Academic Divide

Despite fundamental differences, productive collaboration models are emerging:

Sponsored Research Programs

- Google, Microsoft, Amazon, and Meta fund academic research through grants, providing computing credits and financial support

- Stanford HAI, MIT-IBM Watson AI Lab, and Berkeley's RISELab exemplify structured corporate-academic partnerships

- These arrangements typically allow academic publication while giving corporate sponsors early insight into findings

Talent Exchange Programs

- Visiting researcher positions allow academics temporary corporate lab access

- Corporate researchers sometimes hold adjunct faculty positions, teaching while maintaining industry roles

- PhD internships at corporate labs provide students with large-scale infrastructure experience

Open Source Ecosystems

- PyTorch (Meta), TensorFlow (Google), and JAX (Google) as foundational frameworks

- Corporate labs releasing open-weight pre-trained models (such as Meta's Llama and Google's Gemma) that academic researchers can fine-tune

- Shared benchmarks and evaluation frameworks enabling comparable research

The Cyfuture AI Advantage

At Cyfuture AI, we recognize that the future of AI research lies not in choosing between corporate and academic approaches, but in synthesizing their complementary strengths. Our infrastructure solutions provide academic institutions with enterprise-grade GPU access and computational resources, while our consulting services help corporations adopt the rigorous validation and theoretical grounding that characterize academic research.

With deployment capabilities spanning cloud, edge, and hybrid environments, Cyfuture AI bridges the resource gap that traditionally separates academic ambition from corporate-scale execution.

The Global AI Research Landscape: Geographic and Geopolitical Dimensions

U.S. Dominance with Chinese Convergence

U.S.-based institutions produced 40 notable AI models in 2024, compared to China's 15 and Europe's three. However, the performance gap is narrowing rapidly.

Performance differences on major benchmarks such as MMLU and HumanEval shrank from double digits in 2023 to near parity in 2024. Chinese models like DeepSeek are demonstrating that efficiency innovations can offset raw computational advantages.

Investment Disparities:

U.S. private AI investment hit $109 billion in 2024, nearly 12 times higher than China's $9.3 billion and 24 times the UK's $4.5 billion. This massive capital advantage funds both corporate lab expansion and academic research programs.
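The multiples in that comparison can be reproduced from the three investment figures. This is a small sketch with variable names invented for the example; the dollar amounts are the ones quoted above, in billions.

```python
# 2024 private AI investment in billions of USD, as quoted in this article.
investment_busd = {"US": 109.0, "China": 9.3, "UK": 4.5}

us_total = investment_busd["US"]
# Ratio of U.S. investment to each other country's investment.
ratios = {
    country: us_total / amount
    for country, amount in investment_busd.items()
    if country != "US"
}

for country, ratio in ratios.items():
    print(f"US investment is about {ratio:.1f}x that of {country}")
```

The results land close to the rounded "nearly 12 times" and "24 times" figures used in the text.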

European Challenges:

European AI research faces structural disadvantages:

- Fragmented national funding systems

- GDPR and regulatory constraints on data access

- Limited venture capital compared to U.S. and China

- Brain drain to better-funded U.S. corporate labs

Yet Europe maintains strengths in AI ethics, regulation, and human-centered design—areas where thoughtful academic research increasingly influences global AI development.

The Future Convergence: Hybrid Models and Emerging Paradigms

Breaking Down False Dichotomies

The corporate-vs-academic framing increasingly oversimplifies reality:

Corporate Labs Embracing Academic Values:

- Anthropic's constitutional AI research prioritizes safety over speed-to-market

- Google DeepMind maintains strong publication records and academic collaborations

- Demis Hassabis shared the 2024 Nobel Prize in Chemistry for protein structure prediction work at DeepMind

Academic Labs Adopting Corporate Practices:

- Stanford's HAI increasingly focuses on deployable, policy-relevant research

- University spin-offs blur lines between academic research and commercial application

- Grant proposals now emphasize "broader impact" and practical deployment pathways

Cyfuture AI's Role in the Evolving Landscape

The convergence creates new opportunities for infrastructure providers and AI consultancies. Cyfuture AI's unique positioning enables:

For Academic Institutions:

- Enterprise-grade infrastructure at academic budgets

- GPU-as-a-Service models providing elastic computational capacity

- DevOps and MLOps support allowing researchers to focus on science, not system administration

For Corporate Labs:

- Hybrid cloud architectures balancing performance, cost, and compliance

- Edge AI deployment for low-latency inference at scale

- Security and governance frameworks meeting enterprise requirements

The future isn't corporate or academic—it's intelligently designed collaborations enabled by flexible infrastructure.

Accelerate Your AI Innovation with Cyfuture AI

The corporate-vs-academic divide doesn't have to constrain your AI ambitions.

Whether you're an academic institution seeking to punch above your computational weight class, or an enterprise demanding the rigor and validation that characterizes world-class research, Cyfuture AI delivers infrastructure and expertise tailored to your unique requirements.

Our solutions include:

- Scalable GPU Infrastructure: From single-instance development to multi-node training clusters

- Hybrid Deployment Architectures: Seamlessly spanning cloud, edge, and on-premise environments

- MLOps and Research Engineering: Letting your team focus on innovation, not infrastructure management

- Governance and Compliance Frameworks: Meeting enterprise security and regulatory requirements without sacrificing research agility

78% of organizations reported using AI in at least one business function in 2024, jumping from 55% in 2023. The AI revolution is accelerating, and infrastructure capabilities increasingly separate leaders from laggards.

Transform your AI capabilities with infrastructure that scales from research prototype to production deployment—without compromising on performance, security, or cost-efficiency.

Contact Cyfuture AI today to discover how our solutions bridge the corporate-academic divide and accelerate your AI innovation timeline.

FAQs

1. What are the main differences between corporate and academic AI labs?

Corporate AI labs prioritize commercial applications, rapid iteration, and product deployment, backed by massive computational resources (individual commitments of $65-100 billion). Academic AI labs emphasize fundamental research, theoretical understanding, peer-reviewed publication, and training researchers, typically operating with grants of $50,000-$5,000,000.

2. Which produces better AI research—corporate or academic labs?

Neither is objectively "better"—they excel at different objectives. Corporate labs lead in scaling existing techniques, engineering optimizations, and deploying AI at internet scale. Academic labs lead in novel theoretical frameworks, interpretability research, and exploring paradigms without immediate commercial viability. Breakthroughs often require both: academic insight refined through corporate engineering.

3. How much computational advantage do corporate labs have?

The gap is enormous. By the end of 2024, Meta's build-out included 350,000 NVIDIA H100 GPUs within a portfolio equivalent to nearly 600,000 H100s, while typical academic labs access dozens to hundreds of GPUs. The cost of equivalent inference dropped 280-fold in two years, but absolute compute access remains orders of magnitude higher in corporate settings.

4. Can academic researchers access corporate-level computational resources?

Partially. Cloud providers offer research credits; corporate sponsored programs provide infrastructure access; and companies like Cyfuture AI offer GPU-as-a-Service at academic pricing. However, sustained access to hundreds of thousands of GPUs remains exclusively corporate territory.

5. Why don't corporate labs publish all their research?

Competitive advantage concerns, intellectual property protection, and strategic timing drive selective publication. Companies share research when recruiting and PR benefits exceed information disclosure risks, or when competitive advantages have diminished. Some research is never published because of its proprietary commercial value.

6. How are corporate labs affecting academic AI research?

More AI researchers now work in corporate environments, where the necessary hardware and resources are more easily available. This raises questions about the role of academic AI research, student retention, and faculty recruiting. Academic labs face brain drain, struggle to compete for talent, and increasingly partner with corporate labs for computational access.

7. What role does Cyfuture AI play in this landscape?

Cyfuture AI bridges the corporate-academic divide by providing enterprise-grade infrastructure at accessible price points, enabling academic researchers to conduct experiments requiring significant computational resources while helping corporations adopt rigorous validation and governance frameworks. Our hybrid cloud, edge, and on-premise solutions serve both research and production deployment needs.

8. Which AI labs are leading in 2025-2026?

U.S.-based institutions produced 40 notable AI models in 2024, with Google DeepMind, OpenAI, Anthropic, and Meta leading corporate labs. Stanford HAI, MIT CSAIL, UC Berkeley, and Carnegie Mellon lead academic institutions. Chinese labs like DeepSeek demonstrate rapid capability growth despite smaller budgets.

9. How does geographic location affect AI lab capabilities?

U.S. private AI investment ($109 billion) vastly exceeds that of China ($9.3 billion) and the UK ($4.5 billion). Silicon Valley proximity provides corporate labs with talent access, venture capital, and infrastructure advantages. Academic labs benefit from clustering: Stanford's location near major tech companies creates unique collaboration opportunities unavailable to geographically isolated institutions.

Author Bio:

Meghali is a tech-savvy content writer with expertise in AI, Cloud Computing, App Development, and Emerging Technologies. She excels at translating complex technical concepts into clear, engaging, and actionable content for developers, businesses, and tech enthusiasts. Meghali is passionate about helping readers stay informed and make the most of cutting-edge digital solutions.