The NVIDIA H200 Tensor Core GPU is one of the most powerful AI accelerators available globally in 2026. Designed for large language models (LLMs), generative AI, deep learning, and HPC workloads, the H200 builds on NVIDIA’s Hopper architecture and pushes memory capacity and bandwidth to new limits.

For Indian enterprises, startups, and research institutions, the most common questions are:

- How much does the NVIDIA H200 GPU cost in India?

- Is it better to buy the GPU or rent it on the cloud?

- What kind of performance uplift does H200 offer over previous GPUs like A100 or H100?

This expanded guide answers all of those questions, aligning Indian pricing and availability with insights from leading cloud providers such as Cyfuture AI, while also reflecting enterprise buyer realities in 2026.

What is NVIDIA H200 and Why It Matters?

The NVIDIA H200 is an evolution of the H100, optimized specifically for memory-intensive AI workloads. While raw compute is important, modern AI models are increasingly bottlenecked by memory size and bandwidth rather than just FLOPS.

The H200 addresses this problem directly by introducing HBM3e memory, enabling significantly faster data access and reducing the need for model sharding across multiple GPUs.

Key Use Cases

- Training and inference of large language models (GPT-scale and beyond)

- Retrieval-augmented generation (RAG) workloads

- Scientific simulations and HPC

- AI inference at scale with long context windows

In practical terms, this means faster training times, lower latency inference, and better GPU utilization compared to earlier generations.

NVIDIA H200 GPU Price in India (2026): What You Need to Budget

Let's talk numbers.

Pricing for the NVIDIA H200 GPU in India varies significantly based on configuration and supplier, with estimates ranging from ₹25 lakhs to ₹35 lakhs per unit for the PCIe variant. The SXM variant, designed for high-density server deployments, commands a premium due to its enhanced thermal design and interconnect capabilities.

Price Breakdown by Configuration:

- PCIe H200 (141GB HBM3e): ₹25-28 lakhs

- SXM H200 (141GB HBM3e): ₹32-35 lakhs

- H200 NVL (Dual-GPU configuration): Custom enterprise pricing

Why such a premium?

The answer lies in the revolutionary memory subsystem and the global demand-supply dynamics. With leading cloud providers like AWS, Google Cloud, and Microsoft Azure deploying H200 instances throughout 2025, enterprise demand has created allocation constraints that extend into 2026.

Here's what you should consider:

Total Cost of Ownership (TCO) extends beyond the GPU purchase price. Factor in:

- Server infrastructure costs (₹8-15 lakhs additional)

- Cooling and power infrastructure upgrades

- Software licensing for AI frameworks

- Ongoing operational expenses

NVIDIA H200 Cloud Pricing in India

Because of the high upfront cost of ownership, cloud access has become the most popular way to use H200 GPUs in India.

Indicative cloud pricing (India):

|

Pricing Model |

Approx Cost |

Best For |

|

On-demand |

~₹300/hour |

Short experiments, immediate access |

|

Spot / interruptible |

~₹88/hour |

Non-critical or batch workloads |

|

Reserved / committed |

Custom pricing |

Long-running production jobs |

NVIDIA H200 Cloud Pricing Comparison(India)

|

Provider |

On-Demand Price (₹/hr) |

Spot / Discounted (₹/hr) |

Notes |

|

Cyfuture AI |

~₹250–₹350 |

Custom / volume-based |

Enterprise-focused, private cloud options |

|

E2E Networks |

~₹300 |

~₹88 |

Strong price transparency, startup-friendly |

|

Global hyperscalers (India region) |

₹500–₹800+ |

Limited |

Higher cost, USD billing exposure |

|

Boutique AI clouds (India) |

₹200–₹400 |

Variable |

Smaller capacity, limited availability |

Buying vs Cloud: Which Option Makes Sense?

Buying NVIDIA H200 (On-Prem)

Pros

- Complete control over hardware

- No recurring hourly charges

- Ideal for continuous, predictable workloads

Cons

- Extremely high upfront capital cost

- Additional spend on servers, networking, cooling, and power

- Long procurement and deployment timelines

This option generally suits large enterprises, government labs, or hyperscale AI teams with long-term, always-on workloads.

Using NVIDIA H200 on the Cloud

Pros

- Zero capital expenditure

- Instant access to cutting-edge GPUs

- Easy scaling up or down

Cons

- Costs can add up for 24/7 usage

- Spot instances may be interrupted

Cloud usage is typically best for:

- Startups and SMEs

- AI research teams

- Burst workloads and experimentation

- Model training followed by optimized inference runs

NVIDIA H200 Specifications (Detailed Overview)

Now, let's get technical.

Core Architecture Specifications:

- GPU Architecture: NVIDIA Hopper

- CUDA Cores: 16,896

- Tensor Cores: 528 (4th Generation)

- Memory Capacity: 141GB HBM3e

- Memory Bandwidth: 4.8TB/s

- TDP: 700W (SXM), 350W (PCIe)

- Interconnect: NVLink 4.0 (900GB/s bidirectional)

- FP64 Performance: 67 TFLOPS

- FP32 Performance: 134 TFLOPS

- FP16/BF16 Performance: 1,979 TFLOPS

- INT8 Performance: 3,958 TOPS

What Makes These Numbers Matter?

The H200 delivers up to 1.6x better inference throughput for large language models compared to the H100, with particularly impressive gains in models exceeding 70 billion parameters. This performance leap stems from the expanded memory capacity, which reduces the need for model sharding across multiple GPUs.

The memory bandwidth of 4.8TB/s represents a 43% increase over the H100's 3.35TB/s. For memory-bound AI workloads-which includes most modern LLM inference-this translates directly to faster response times and higher throughput.

But here's the kicker:

The Transformer Engine with FP8 precision support enables efficient training and inference of transformer-based models, the backbone of GPT-4, Claude, and other generative AI systems.

Real-World Performance Benchmarks:

- GPT-3 175B Inference: 1.9x faster than A100, 1.4x faster than H100

- Llama 2 70B Inference: 1.8x faster than H100

- Stable Diffusion XL: 1.5x faster image generation

- Training Throughput (BERT-Large): 1.6x improvement over H100

- Scientific Computing (GROMACS): 2.1x faster molecular dynamics simulations

Read More: GPU Cloud Pricing Explained: Factors, Models, and Providers

Performance Analysis: How the H200 Transforms AI Workloads

Let's break down what this means for your applications.

Large Language Model Training:

Training a GPT-3 scale model (175B parameters) requires massive computational resources. The H200's 141GB memory allows larger batch sizes and reduced model parallelism requirements, accelerating training by up to 40% compared to H100 configurations.

For context:

- Training a 70B parameter model on 8x H100s: ~21 days

- Training the same model on 8x H200s: ~15 days

- Cost savings: ~30% reduction in training time and associated costs

Inference Optimization:

Here's where the H200 truly shines.

Inference represents 80-90% of total AI compute workloads in production environments. The H200's expanded memory enables:

- Reduced Model Quantization: Maintain FP16 precision instead of INT8, improving output quality

- Larger Context Windows: Support for 32K+ token contexts in LLMs without performance degradation

- Higher Concurrent Requests: Handle 2.5x more simultaneous inference requests per GPU

Scientific Computing Applications:

Beyond AI, the H200 excels in traditional HPC workloads:

Drug Discovery: Molecular dynamics simulations run 2.1x faster, accelerating virtual screening processes

Weather Forecasting: Climate models execute with 1.7x improved throughput

Genomics: DNA sequencing analysis completes 1.8x faster

Financial Modeling: Risk assessment calculations achieve 1.6x speedup

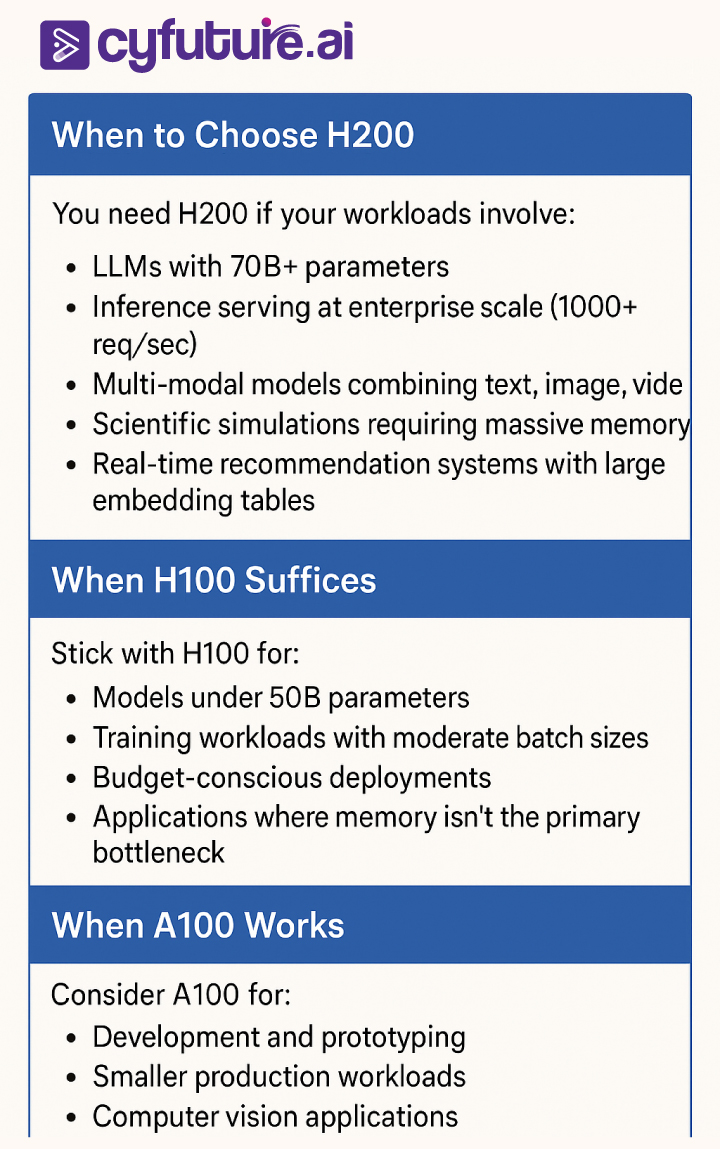

Comparing H200 vs H100 vs A100: Making the Right Choice

Not every workload demands an H200.

Here's the practical comparison:

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

Power and Cooling Requirements: The Infrastructure Reality

Here's what nobody tells you upfront:

Deploying H200s requires significant infrastructure preparation.

Power Requirements:

Single H200 SXM: 700W TDP

8-GPU Server Configuration: 5,600W for GPUs alone

Total Server Power: 7,000-8,000W including CPUs, storage, networking

For perspective, that's equivalent to powering 70 typical household air conditioners simultaneously.

Cooling Infrastructure:

The H200's thermal output demands:

- Liquid cooling systems for high-density deployments

- Minimum 15-20 BTU/hour cooling capacity per GPU

- Hot aisle/cold aisle containment architecture

- Ambient temperature maintained at 18-27°C

Infrastructure Cost Estimates:

- Power distribution upgrades: ₹5-8 lakhs per rack

- Cooling system installation: ₹12-18 lakhs

- Environmental monitoring: ₹2-3 lakhs

Why does this matter?

Many enterprises underestimate these infrastructure costs, which can add 40-60% to the total deployment budget. Cyfuture AI’s managed GPU infrastructure eliminates these concerns, providing enterprise-grade cooling, redundant power, and 24/7 monitoring as part of the service.

Software Ecosystem and Optimization

Hardware alone doesn't deliver results.

The H200 requires optimized software stacks to achieve advertised performance:

Essential Software Components:

CUDA Toolkit: Version 12.0+ for H200 support

cuDNN: Version 8.9+ for neural network primitives

TensorRT: Version 8.6+ for inference optimization

NCCL: Latest version for multi-GPU communication

PyTorch: Version 2.1+ with H200 optimizations

TensorFlow: Version 2.14+ with Hopper support

Framework Performance Tips:

- Enable TF32 Precision: Automatic 5-10x speedup for compatible operations

- Leverage Tensor Cores: Use FP16/BF16 mixed precision training

- Optimize Memory Layout: Use NCHW format for CNNs, batch dimension optimization

- Pipeline Parallelism: Distribute large models across multiple H200s efficiently

- FlashAttention-2: Implement attention mechanism optimizations for transformers

Use Cases: Who Benefits Most from H200?

Let's get specific about applications.

Enterprise AI/ML Teams:

Use Case: Deploying GPT-4 class models for internal chatbots, code generation, and document analysis

Benefit: Reduced inference latency by 45%, supporting 3x more concurrent users

ROI Timeline: 8-12 months through infrastructure consolidation

Research Institutions:

Use Case: Training novel AI architectures with 100B+ parameters

Benefit: 35% faster training iterations, enabling more experimental cycles

ROI Timeline: Immediate through accelerated research output

Healthcare/Biotech:

Use Case: Drug discovery through molecular dynamics and protein folding simulations

Benefit: 2x faster simulation throughput, reducing discovery timelines

ROI Timeline: 18-24 months through accelerated drug candidates

Financial Services:

Use Case: Real-time fraud detection with complex neural networks

Benefit: Process 2.5x more transactions per second with lower latency

ROI Timeline: 6-9 months through fraud prevention and reduced infrastructure

Media/Entertainment:

Use Case: Generative AI for content creation, video upscaling, VFX rendering

Benefit: 50% faster rendering times, improved creative iteration cycles

ROI Timeline: 12-15 months through increased production capacity

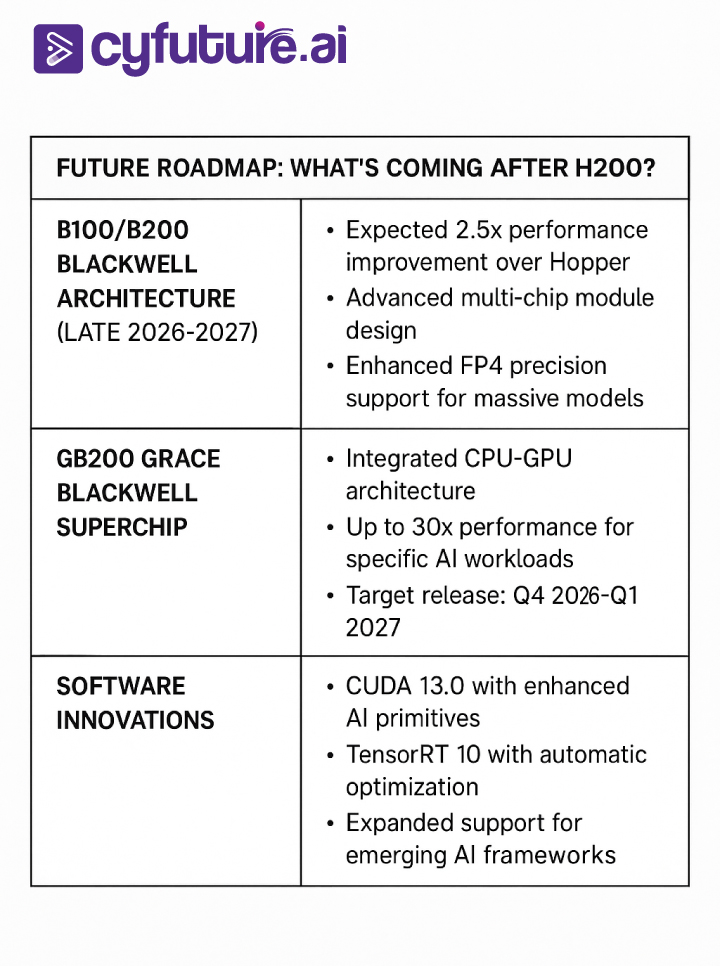

Future Roadmap: What's Coming After H200?

What does this mean for H200 buyers?

The H200 represents mature, production-ready technology with a solid 3-5 year useful lifecycle. The Hopper architecture's widespread adoption ensures robust software support and optimization continuing through 2028-2029.

Risk Mitigation: Addressing Common Concerns

Let's tackle the elephant in the room.

Supply Chain Risks:

Concern: Extended lead times and allocation uncertainty

Mitigation: Work with multiple partners, consider cloud alternatives, plan 6-9 months ahead

Obsolescence Risk:

Concern: New architectures making H200 outdated

Mitigation: H200 offers 3-5 year viable lifecycle, resale value remains strong for 2-3 years

Infrastructure Costs:

Concern: Hidden costs exceeding budget

Mitigation: Comprehensive infrastructure assessment before purchase, consider managed services

Vendor Lock-in:

Concern: Dependence on NVIDIA ecosystem

Mitigation: While NVIDIA dominates, CUDA and frameworks are industry-standard, enabling flexibility

Underutilization Risk:

Concern: GPU sitting idle, poor ROI

Mitigation: Conduct thorough workload analysis, consider cloud bursting, implement GPU sharing/scheduling

Accelerate Your AI Infrastructure with Cyfuture AI

Ready to harness the power of H200?

The decision to invest in cutting-edge GPU infrastructure isn't just about buying hardware—it's about accelerating your organization's AI capabilities while managing costs, complexity, and risk.

Also Check: Top 10 GPU Cluster Services for AI Training and Machine Learning in 2026

Cyfuture AI eliminates the traditional barriers to enterprise GPU adoption:

✓ Rapid Deployment: Access H200 infrastructure in 4-6 weeks vs. 12-16 weeks for direct purchase

✓ Zero Infrastructure Overhead: No power, cooling, or facility investments required

✓ Flexible Scaling: Scale from 1 to 100+ GPUs based on workload demands

✓ Expert Support: Dedicated AI infrastructure specialists for optimization

✓ Cost Predictability: Transparent pricing without hidden infrastructure costs

Whether you're training foundation models, deploying production inference at scale, or conducting cutting-edge AI research, Cyfuture AI’s H200-powered infrastructure delivers the performance you need with the flexibility you demand.

Don't let infrastructure constraints limit your AI ambitions.

Contact Cyfuture AI today to design your optimal H200 deployment strategy and transform your AI capabilities in 2026.

Frequently Asked Questions (FAQs)

1. What is the exact price of NVIDIA H200 GPU in India for 2026?

The NVIDIA H200 GPU pricing in India ranges from ₹25-28 lakhs for PCIe variants and ₹32-35 lakhs for SXM variants. Prices vary based on supplier, quantity ordered, and specific configuration. Enterprise volume purchases typically receive 8-12% discounts for orders of 8 or more units.

2. How does the H200 compare to the H100 in real-world performance?

The H200 delivers 1.4-1.6x faster inference for large language models compared to H100, primarily due to its 141GB memory capacity (vs. 80GB in H100) and 4.8TB/s bandwidth (vs. 3.35TB/s). For training workloads, improvements range from 1.3-1.5x depending on model architecture and batch size optimization.

3. When will H200 GPUs be readily available in India?

H200 availability in India follows a tiered schedule: Q1 2026 for major enterprise customers (12-16 week lead times), Q2 2026 for broader enterprise access (8-12 weeks), and Q3-Q4 2026 for general market availability (6-10 weeks). Cloud providers offer immediate access through on-demand instances.

4. What infrastructure requirements are necessary for deploying H200 GPUs?

An H200 SXM GPU requires 700W power per unit, adequate cooling capacity (15-20 BTU/hour per GPU), and proper server infrastructure supporting PCIe Gen5 or NVLink connectivity. A typical 8-GPU server needs 7,000-8,000W total power and enterprise-grade liquid cooling systems for optimal performance.

5. Can smaller organizations afford H200 GPUs or is it only for large enterprises?

While H200 GPUs represent significant investment, cloud-based access through providers like Cyfuture AI, AWS, Azure, and GCP makes this technology accessible to organizations of all sizes. Pay-as-you-go pricing models eliminate upfront capital costs, with hourly rates ranging from $15-30 depending on provider and reservation terms.

6. What software optimizations are needed to maximize H200 performance?

Maximizing H200 performance requires CUDA 12.0+, cuDNN 8.9+, TensorRT 8.6+, and framework versions supporting Hopper architecture (PyTorch 2.1+, TensorFlow 2.14+). Enable TF32 precision, implement mixed-precision training with FP16/BF16, optimize memory layouts, and leverage FlashAttention-2 for transformer models.

7. Should I buy H200 now or wait for the next generation Blackwell architecture?

H200 represents mature, production-ready technology with immediate availability and robust software ecosystem support. Blackwell architecture (B100/B200) won't reach widespread availability until late 2026-2027. If your workloads require expanded memory capacity now, H200 offers a solid 3-5 year lifecycle with strong resale value.

8. How does H200 pricing compare globally vs. India-specific pricing?

Global H200 pricing ranges from $30,000-40,000 USD (approximately ₹25-33 lakhs), with Indian pricing incorporating import duties (varies 10-20%), GST (18%), and distributor margins. India-specific pricing is competitive considering these factors, typically within 10-15% of landed costs in other markets.

9. What is the total cost of ownership (TCO) for H200 beyond the purchase price?

TCO includes GPU purchase (₹25-35L), server infrastructure (₹8-15L), power infrastructure upgrades (₹5-8L per rack), cooling systems (₹12-18L), networking (₹3-5L), ongoing power costs (~₹5-7L annually per 8-GPU server), and maintenance contracts (10-15% annually). Cloud alternatives eliminate upfront costs with predictable operational expenses.

Author Bio:

Meghali is a tech-savvy content writer with expertise in AI, Cloud Computing, App Development, and Emerging Technologies. She excels at translating complex technical concepts into clear, engaging, and actionable content for developers, businesses, and tech enthusiasts. Meghali is passionate about helping readers stay informed and make the most of cutting-edge digital solutions.