Are You Wondering Whether the H200 GPU Is Worth the Upgrade from H100?

The AI infrastructure landscape is evolving at breakneck speed, and NVIDIA's latest GPU offerings are at the center of this transformation. The H200 GPU, introduced as the successor to the industry-standard H100, promises revolutionary improvements in memory capacity and bandwidth—but does it justify the premium price tag?

With 141 GB of HBM3e memory at 4.8 TB/s bandwidth—nearly double the capacity of the H100 with 1.4X more memory bandwidth, the H200 represents a significant leap forward. However, whether this translates to real-world value for your specific AI workloads requires a deeper understanding of both GPUs' capabilities.

Here's the thing:

Your choice between these two powerhouses could mean the difference between seamless AI operations and frustrating bottlenecks that slow your innovation.

Let me walk you through everything you need to know.

What is the NVIDIA H200 GPU?

The NVIDIA H200 is the first GPU to offer 141 gigabytes (GB) of HBM3e memory at 4.8 terabytes per second (TB/s), built on the same proven Hopper architecture as the H100. This next-generation data center GPU is specifically engineered to accelerate generative AI, large language models (LLMs), and high-performance computing (HPC) workloads.

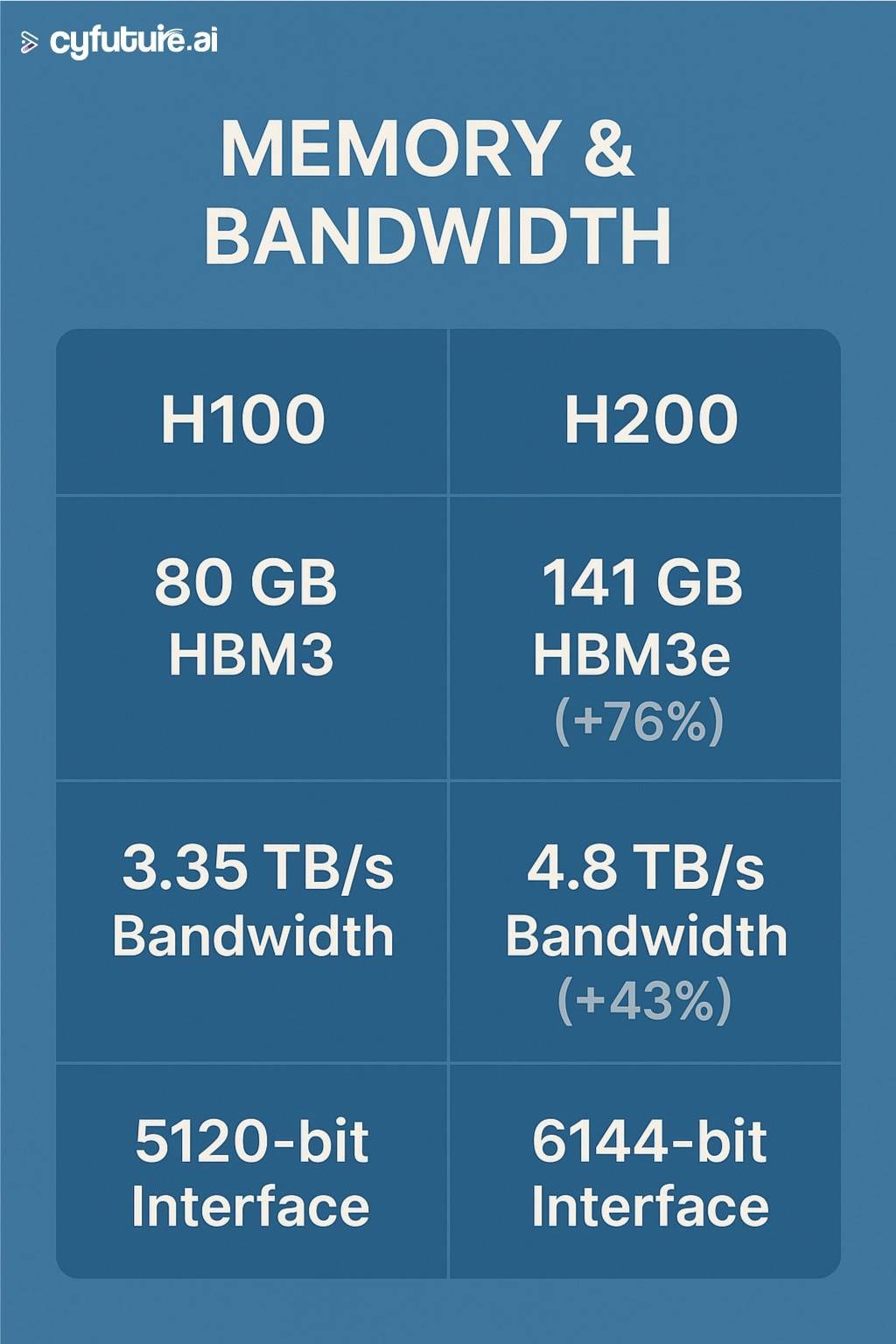

The H200 maintains the same compute architecture as the H100 but solves the critical memory bottleneck that has plagued AI developers working with increasingly large models. The H200 features 76% more memory (VRAM) than the H100 and a 43% higher memory bandwidth, making it particularly powerful for memory-intensive applications.

Core Technical Specifications: H200 vs H100

Understanding the technical differences is crucial for making an informed decision. Let's break down the specifications side by side:

Memory Architecture

The memory upgrade is the H200's defining feature. The H200 GPU has the same compute as an H100 GPU, but with 76% more GPU memory (VRAM) at a 43% higher memory bandwidth.

Compute Performance

Both GPUs share the same Hopper architecture foundation:

- CUDA Cores: 16,896 (full configuration)

- Tensor Cores: 528 (4th Generation)

- Process Technology: 5nm

- Transistor Count: 80 billion

The compute performance remains identical between both GPUs, with up to 9x faster AI training and 30x faster AI inference compared to previous generations for both models.

Power and Thermal Design

H100:

- TDP: 700W (SXM5) / 350W (PCIe)

- Cooling: Air or liquid cooling

H200:

- TDP: 700W (SXM5) / 600W (NVL)

- Cooling: Air, liquid, or hybrid systems

Here's what's remarkable:

Both H100 and H200 sip from the same 700W straw. The H200 isn't just faster; it squeezes more juice—delivering faster throughput with no added burden.

Performance Benchmarks: Where the H200 Shines

The real question every tech leader asks is: "How much faster is it in practice?"

Let's examine the data.

Large Language Model Inference

H200 doubles inference performance compared to H100 when handling LLMs such as Llama2 70B. This isn't just marketing speak—independent benchmarks confirm these gains.

According to real-world testing, MLPerf tests on Llama2-70B show the H200 reaching 31,712 tokens/second, compared to the H100's 21,806 tokens/second, reaching a ~45% improvement.

Memory-Intensive Workloads

The H200 truly excels when dealing with long input sequences and large batch sizes. With long input sequences, TPS gets quadratically worse with input length as the GPU needs to process every previous token before generating new tokens, so the H200 is uniquely well-suited for this specific use case.

For large batch workloads, the 8xH200 cluster offers 47% higher performance in BF16 and 36% higher performance in FP8 compared to H100 systems.

High-Performance Computing

HPC applications achieve up to 1.3x more performance over the H100 NVL, with LLM inference accelerated up to 1.7x when using multi-GPU configurations with NVLink.

When the H200 Doesn't Offer Significant Advantages

It's important to note that not all workloads benefit equally. For some workloads, H200 GPUs performed equivalently to or slightly better than H100 GPUs. These benchmarks represent real-world examples like real-time chat applications, code completion tools, and other short-context low-latency inference workloads.

Alsom Check: https://cyfuture.ai/blog/rent-gpu-in-india

Pricing Analysis: The Cost-Benefit Equation

Now for the million-dollar question—literally.

Purchase Costs

H100 Pricing (2026):

- Standard PCIe 80GB: $25,000 - $30,000

- Premium configurations: $30,000 - $35,000

- Bulk OEM orders: $22,000 - $24,000 per unit

H200 Pricing (2026):

- Standard units: $30,000 - $40,000

- Approximately 20-25% higher costs than H100

Cloud Rental Rates

H100 Cloud Pricing: The H100 was originally priced at around $8 per hour on cloud platforms. However, as supply has increased, the cost has dropped significantly - now ranging from about $2 to $3.50 per hour, with some providers offering rates as low as $1.90.

H200 Cloud Pricing: As of May 2025, on-demand rates span $3.72 to $10.60 per GPU-hour across major cloud providers, with Jarvislabs offering on-demand H200 at $3.80/hr - cheapest single-GPU access.

TCO Considerations

Here's where Cyfuture AI makes the difference:

Cyfuture AI provides flexible GPU cloud solutions that eliminate the massive upfront capital expenditure while offering immediate access to both H100 and H200 GPUs. With Cyfuture's infrastructure, enterprises can scale their AI workloads without the burden of hardware ownership, maintenance, and depreciation costs.

Real-World Use Cases: Which GPU for Which Workload?

Let's get practical.

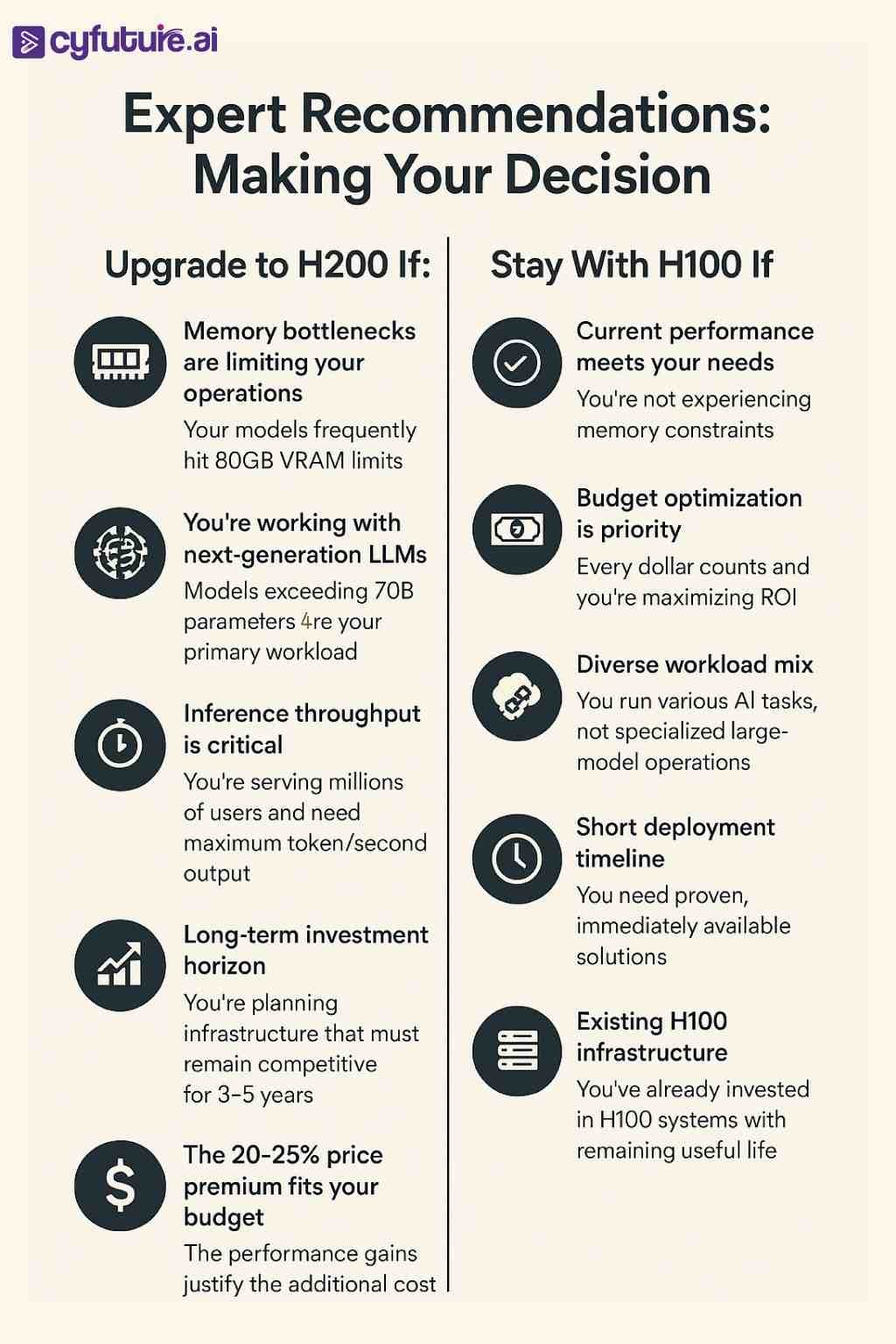

Choose H200 When You're:

1. Training or Serving 100B+ Parameter Models

The enhanced HBM3e memory capabilities of the H200 GPU makes it easier to run inference with much longer input and output sequences with tens of thousands of tokens.

2. Running Long-Context Applications

Examples of real-world applications include summarization (with >256-token output sequences), long-context retrieval, and retrieval-augmented generation.

3. Handling Massive Batch Inference

For latency-insensitive tasks where cost per million tokens matters, the H200's large VRAM allocation enables efficient large-batch processing.

4. Fine-Tuning Foundation Models

Domain-specific fine-tuning enables efficient retraining of base models for fields like finance, healthcare, or legal AI without sharding across GPUs.

Stick With H100 When You're:

1. Running Short-Context Inference

Real-time chat applications, code completion, and other low-latency workloads show minimal performance differences between H100 and H200.

2. Budget-Conscious

If your models run efficiently within 80 GB of memory and you're budget-conscious, the H100 still offers excellent value.

3. Training Mid-Sized Models

Models under 70B parameters typically fit comfortably on H100s without memory constraints.

4. Running Diverse Workload Mixes

For organizations running various AI tasks rather than specialized large-model operations, the H100 provides the best balance of performance and cost.

Infrastructure Compatibility and Migration

One of the biggest concerns enterprises have is: "Can we upgrade without overhauling our entire infrastructure?"

The good news:

Since both GPUs use the same Hopper architecture, most frameworks—like TensorFlow, PyTorch, and JAX—work seamlessly on either.

Software Compatibility

Both GPUs support:

- All major AI frameworks (PyTorch, TensorFlow, JAX)

- NVIDIA's proprietary tools (NeMo, TensorRT)

- Full CUDA compatibility

- Docker containers and Kubernetes clusters

Your existing Docker containers, Kubernetes clusters, or MLOps platforms (like MLflow or Kubeflow) will typically require no major rework.

Form Factor Options

Both GPUs are available in multiple configurations:

- SXM5: Highest performance for dense data center deployments

- PCIe: Flexible integration into standard servers

- NVL: Air-cooled variants for enterprise rack designs

This flexibility ensures that upgrading doesn't necessarily mean replacing your entire infrastructure.

Energy Efficiency and Sustainability

In today's climate-conscious enterprise environment, energy efficiency isn't just about cost—it's about corporate responsibility.

With the introduction of the H200, energy efficiency and TCO reach new levels. This cutting-edge technology offers unparalleled performance, all within the same power profile as the H100.

The H200 provides around 50% power savings on large language model (LLM) inference workloads compared to previous-generation GPUs.

Consider this:

For organizations running 24/7 AI operations, these efficiency gains translate to:

- Lower electricity costs

- Reduced cooling requirements

- Smaller carbon footprint

- Better data center density

Market Availability and Supply Chain Considerations

Unlike the severe shortages that plagued H100 availability in 2023-2024, the supply situation has stabilized.

H100 Availability

NVIDIA reports stable supply levels for H100 and related H200 series GPUs as of mid-2026, with more than 30 cloud providers globally providing immediate access to H100 GPUs.

H200 Availability

The H200 is now widely available through major cloud platforms, including AWS, Azure, Google Cloud, and specialized AI cloud providers like Cyfuture AI.

This improved availability means organizations can access either GPU without months-long waiting periods that characterized earlier years.

Future-Proofing: What About Blackwell (B200)?

Some organizations are wondering whether to wait for NVIDIA's next generation.

The B200 significantly speeds up training, achieving up to 3 times the performance of the H100, but there are important considerations:

- Limited early availability - B200 is currently available from select cloud providers and in limited quantities for enterprise customers

- Higher power requirements - The B200 consumes 1000W, up from the H100's 700W

- Infrastructure changes needed - May require liquid cooling and electrical upgrades

- Premium pricing - Early adopters will pay significantly more

The H200 remains the practical choice for most organizations in 2026, offering production-ready performance with proven reliability.

Read More: https://cyfuture.ai/blog/nvidia-h100-gpu-price-in-india

Cyfuture AI: Your Strategic GPU Cloud Partner

Navigating the complex landscape of GPU infrastructure doesn't have to be overwhelming.

Cyfuture AI offers comprehensive GPU cloud solutions that provide:

Immediate Access: No waiting periods or procurement delays—deploy H100 or H200 instances in minutes

Flexible Pricing: Pay only for what you use, with options ranging from spot instances to reserved capacity

Expert Guidance: Technical consultants who understand your workload requirements and can recommend optimal configurations

Scalability: Seamlessly scale from single GPU instances to multi-node clusters as your needs evolve

Enterprise Support: 24/7 technical support with SLAs that meet enterprise requirements

With Cyfuture AI's infrastructure, you get the performance of cutting-edge GPUs without the capital expenditure, maintenance burden, or depreciation risk.

Accelerate Your AI Innovation With Cyfuture AI

The choice between H200 and H100 isn't just about specifications—it's about aligning your GPU infrastructure with your business objectives and AI ambitions.

For memory-intensive workloads, next-generation LLMs, and applications requiring maximum throughput, the H200's 141 GB of HBM3e memory at 4.8 TB/s bandwidth delivers transformative performance that justifies its premium pricing.

For diverse AI workloads, budget-conscious operations, and proven performance requirements, the H100 continues to offer exceptional value with 9x faster AI training and 30x faster AI inference compared to previous generations.

Both GPUs are available through Cyfuture AI's flexible cloud platform, enabling you to choose the right tool for each specific workload without being locked into a single solution.

The future of AI isn't about choosing one GPU over another—it's about having the flexibility to leverage the right computing resources for each unique challenge.

Frequently Asked Questions (FAQs)

1. What are the main differences between H200 and H100 GPUs?

The H200 GPU offers improved memory bandwidth, larger VRAM capacity, and enhanced efficiency for large-scale AI and HPC workloads compared to the H100.

2. Is the H200 GPU faster than the H100?

Yes. The H200 delivers higher throughput and better performance in training and inference tasks, especially for large language models and data-heavy operations.

3. Should I upgrade from H100 to H200 GPU?

If your workloads involve large AI models, high memory demand, or require maximum efficiency, upgrading to the H200 can deliver significant benefits. For moderate workloads, the H100 still performs excellently.

4. How much VRAM does the H200 GPU have?

The NVIDIA H200 features up to 141GB of HBM3e memory, providing faster data transfer rates compared to the H100’s 80GB of HBM3.

5. What industries benefit most from the H200 GPU upgrade?

Industries in AI research, data analytics, autonomous systems, and cloud computing benefit most due to the H200’s higher efficiency and scalability.

Author Bio:

Meghali is a tech-savvy content writer with expertise in AI, Cloud Computing, App Development, and Emerging Technologies. She excels at translating complex technical concepts into clear, engaging, and actionable content for developers, businesses, and tech enthusiasts. Meghali is passionate about helping readers stay informed and make the most of cutting-edge digital solution